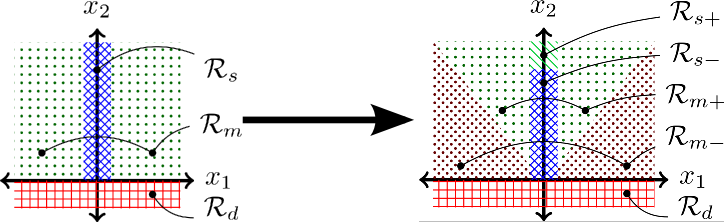

We present a new algorithm for task and motion planning (TMP) and discuss the requirements and abstractions necessary to obtain robust solutions for TMP in general. Our Iteratively Deepened Task and Motion Planning (IDTMP) method is probabilistically complete and offers improved performance and generality compared to a similar, state-of-the-art, probabilistically complete planner. The key idea of IDTMP is to leverage incremental constraint solving to efficiently add and remove constraints on motion feasibility at the task level. We validate IDTMP on a physical manipulator and evaluate scalability on scenarios with many objects and long plans, showing order-of-magnitude gains compared to the benchmark planner and, in a self-comparison, a fourfold speedup from our extensions.

RSS 2018 Workshop on Exhibition and Benchmarking of Task and Motion Planners

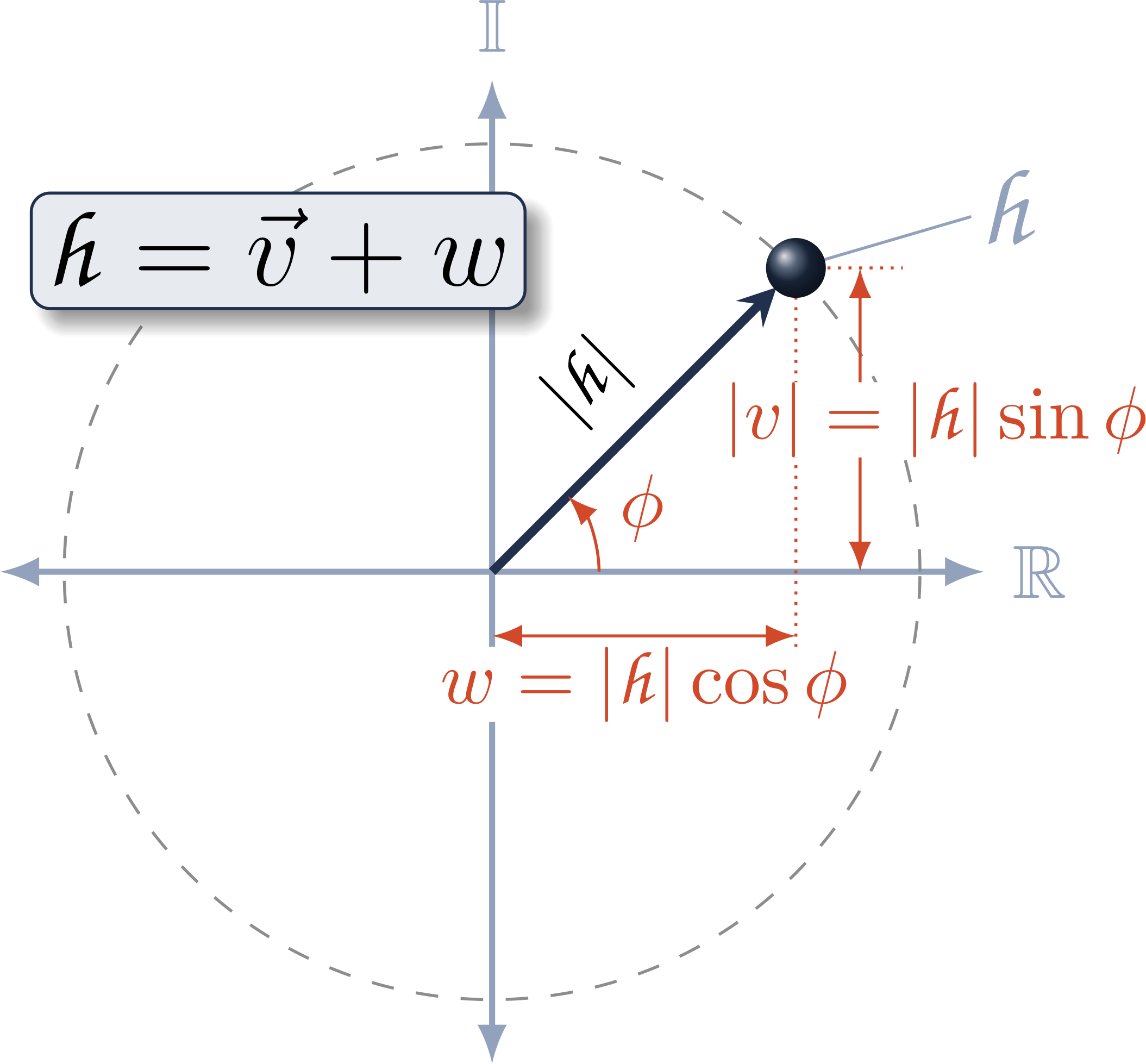

Modern approaches for robot kinematics employ the product of exponentials formulation, represented using homogeneous transformation matrices. The unit dual quaternions are an established alternative representation; however, their use presents certain challenges: the dual quaternion exponential and logarithm contain a zero-angle singularity, and many common operations are less efficient using dual quaternions than with matrices. We present a new derivation of the dual quaternion exponential and logarithm that removes the singularity, and we show that an implicit representation of dual quaternions is more efficient than transformation matrices for common kinematics operations. This work offers a practical connection between dual quaternions and modern exponential coordinates, demonstrating that a dual-quaternion-based approach provides a more computationally efficient alternative to matrix-based representations for many operations in robot kinematics.
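To illustrate the kind of zero-angle singularity mentioned above, here is a minimal sketch for ordinary (not dual) quaternions. It is not the derivation from the paper: it only shows the common workaround of replacing `sin(theta)/theta` with a Taylor series near zero. The function names and the `1e-4` threshold are assumptions chosen for this example.

```python
import math

def sinc(theta):
    """Return sin(theta)/theta, using a Taylor series near zero to avoid 0/0."""
    if abs(theta) < 1e-4:  # assumed threshold, chosen for illustration
        t2 = theta * theta
        return 1.0 - t2 / 6.0 + t2 * t2 / 120.0
    return math.sin(theta) / theta

def quat_exp_pure(v):
    """Exponential of a pure quaternion with vector part v = (x, y, z).

    Returns the unit quaternion (w, x, y, z). The naive formula divides by
    the norm of v, which fails when v is zero; sinc() sidesteps that.
    """
    theta = math.sqrt(sum(c * c for c in v))
    s = sinc(theta)
    return (math.cos(theta), s * v[0], s * v[1], s * v[2])
```

With this form, `quat_exp_pure((0.0, 0.0, 0.0))` evaluates to the identity quaternion rather than raising a division error.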

We present a method for Cartesian workspace control of a robot manipulator that enforces joint-level acceleration, velocity, and position constraints using linear optimization. This method is robust to kinematic singularities. On redundant manipulators, we avoid poor configurations near joint limits by including a maximum permissible velocity term to center each joint within its limits. Compared to the baseline Jacobian damped least-squares method of workspace control, this new approach honors kinematic limits, ensuring physically realizable control inputs and providing smoother motion of the robot.
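For context, the baseline named above, Jacobian damped least squares, can be sketched as follows. This is only the baseline, not the linear-optimization method of the paper, and it enforces no joint limits. The two-link planar arm, the link lengths, and the damping value are assumptions made for the example.

```python
import math

def jacobian_2link(q1, q2, l1=1.0, l2=1.0):
    """Position Jacobian of a hypothetical planar two-link arm."""
    s1, c1 = math.sin(q1), math.cos(q1)
    s12, c12 = math.sin(q1 + q2), math.cos(q1 + q2)
    return [[-l1 * s1 - l2 * s12, -l2 * s12],
            [l1 * c1 + l2 * c12, l2 * c12]]

def dls_step(J, dx, damping=0.1):
    """Damped least squares: dq = J^T (J J^T + damping^2 I)^-1 dx."""
    # A = J J^T + damping^2 I, a symmetric 2x2 matrix [[a, b], [b, d]]
    a = J[0][0] ** 2 + J[0][1] ** 2 + damping ** 2
    b = J[0][0] * J[1][0] + J[0][1] * J[1][1]
    d = J[1][0] ** 2 + J[1][1] ** 2 + damping ** 2
    det = a * d - b * b  # strictly positive whenever damping > 0
    # y = A^-1 dx via the closed-form 2x2 inverse
    y0 = (d * dx[0] - b * dx[1]) / det
    y1 = (-b * dx[0] + a * dx[1]) / det
    # dq = J^T y
    return [J[0][0] * y0 + J[1][0] * y1,
            J[0][1] * y0 + J[1][1] * y1]
```

The damping term keeps the step finite even when the arm is fully stretched out (`q2 = 0`), but nothing in this update bounds joint position, velocity, or acceleration, which is the gap the constrained formulation addresses.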

We generate workspace trajectories for multiple waypoints, such that each trajectory segment has constant-axis rotation. This improves on the "textbook" approaches, which are point-to-point, e.g., SLERP, or take indirect paths, e.g., axis-angle blends. We derive this approach by blending subsequent spherical linear interpolation phases, computing interpolation parameters so that rotational velocity is continuous.
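As a reference point, the point-to-point SLERP mentioned above can be sketched as below. This is the standard textbook interpolation between two orientations, not the blended multi-waypoint method described here. The `(w, x, y, z)` ordering and the small-angle threshold are assumptions of this sketch.

```python
import math

def slerp(q0, q1, t):
    """Spherical linear interpolation between unit quaternions (w, x, y, z)."""
    dot = sum(a * b for a, b in zip(q0, q1))
    if dot < 0.0:
        # q and -q encode the same rotation; flip to take the shorter arc
        q1 = tuple(-c for c in q1)
        dot = -dot
    theta = math.acos(min(1.0, dot))
    if theta < 1e-6:
        # Nearly identical orientations: fall back to linear interpolation
        q = tuple(a + t * (b - a) for a, b in zip(q0, q1))
    else:
        s = math.sin(theta)
        w0 = math.sin((1.0 - t) * theta) / s
        w1 = math.sin(t * theta) / s
        q = tuple(w0 * a + w1 * b for a, b in zip(q0, q1))
    n = math.sqrt(sum(c * c for c in q))
    return tuple(c / n for c in q)
```

Chaining such segments through several waypoints gives constant-axis rotation within each segment, but the rotational velocity jumps at every waypoint, which is what blending adjacent phases is meant to smooth out.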

We show that accurate, visually-guided manipulation is possible without static camera registration. Here, we register the camera online, converging in seconds, by visually tracking features on the robot and filtering the result. This handles cases such as perturbed camera positions, wear and tear on camera mounts, and even a camera held by a human.

Motion Grammars model robot policies as context-free grammars. This is a useful intermediate representation that enables a variety of policy generation, analysis, and software synthesis techniques.
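As a toy illustration of what a context-free policy language can express, the sketch below recognizes the language generated by `S -> "grasp" S "release" | (empty)`, i.e., every grasp is later matched by a release. The grammar, token names, and function are hypothetical and are not taken from the Motion Grammar work; a counter stands in for the parser stack.

```python
def accepts(tokens):
    """Recognize S -> 'grasp' S 'release' | (empty) over a token sequence.

    The language (n grasps followed by n releases) is context-free but not
    regular, so no finite-state machine can recognize it for unbounded n.
    """
    depth = 0          # number of unmatched 'grasp' tokens (the stack height)
    releasing = False  # once a 'release' is seen, no further 'grasp' is allowed
    for tok in tokens:
        if tok == "grasp":
            if releasing:
                return False
            depth += 1
        elif tok == "release":
            releasing = True
            depth -= 1
            if depth < 0:
                return False
        else:
            return False  # token outside the hypothetical alphabet
    return depth == 0
```

For example, `accepts(["grasp", "grasp", "release", "release"])` returns `True`, while a sequence with an unmatched `"grasp"` is rejected.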

We demonstrate the motion grammar through the physical human-robot games of Yamakuzushi and Chess and analyze the theoretical capabilities and guarantees of this approach.

We synthesize software for speed-controlled robot walking from the mathematical system model using supervisory control of a context-free Motion Grammar. First, we use Human-Inspired control to identify parameters for fixed speed walking and for transitions between fixed speeds, guaranteeing dynamic stability. Next, we build a Motion Grammar representing the discrete-time control for this set of speeds. Then, we synthesize C code from this grammar and generate supervisors online to achieve desired walking speeds, guaranteeing correctness of discrete computation. Finally, we demonstrate this approach on the Aldebaran NAO, showing stable walking transitions with dynamically selected speeds.

We pose a learning-from-demonstration scenario as grammatical inference. First, we perform a visual analysis to extract a task description from human demonstration. Then, we transfer the results to a simulated robot.

To produce correctly operating robotic systems, we need a way to modify the system dynamics to achieve the desired behavior. To help automate the derivation of correctly operating controlled systems, we introduce the Motion Grammar Calculus, a set of rewrite rules for Context-Free Hybrid Systems based on the Motion Grammar.

A multi-process software design increases robustness by isolating errors to a single process, allowing the rest of the system to continue operating. Ach is a new inter-process communication library that is highly efficient – outperforming Linux sockets – and formally verified. It operates either in userspace via POSIX shared memory or as a Linux kernel module, enabling multiplexing via the select()/poll()/epoll() calls.